Op-ed: Exploring the boundaries of simulated consciousness with LMMs

The advent of LLM-driven artificial intelligence may set the stage for an evolutionary experiment with cellular automata akin to Conway's Game of Life, but amplified by an order of magnitude.

Cover art/illustration via CryptoSlate. Image includes combined content which may include AI-generated content.

Can AI systems like OpenAI's GPT-4, Anthropic's Claude 2, or Meta's Llama 2 help identify the origins and nuances of the concept of ‘consciousness’?

Advances in computational models and artificial intelligence open new vistas in understanding complex systems, including the tantalizing puzzle of consciousness. I recently wondered,

“Could a system as basic as a cellular automaton, such as Conway's Game of Life (GoL), exhibit traits akin to ‘consciousness’ if evolved under the right conditions?”

Even more intriguingly, could advanced AI models like Large Language Models (LLMs) aid in identifying or even facilitating such emergent properties? This op-ed explores these questions by proposing a novel experiment that seeks to integrate cellular automata, evolutionary algorithms, and cutting-edge AI models.

The idea that complex systems can emerge from simple rules is a tempting prospect for researchers in fields ranging from biology to artificial intelligence. In particular, whether “consciousness” can evolve from simple cellular automata systems, like Conway's Game of Life, poses ethical and philosophical dilemmas.

Consciousness evolved

Consciousness is a subject that has fascinated philosophers, neuroscientists, and theologians alike. However, the concept is yet to be fully understood. On the one hand, we have traditional views that align consciousness with the notion of a “soul,” sometimes positing divine creation as the source. On the other hand, we have emerging perspectives that see consciousness as a product of complex computation within our biological “hardware.”

The advent of advanced AI models like Large Language Models (LLMs) and Large Multimodal Models (LMMs) has transformed our ability to analyze and understand complex data sets. These models can recognize patterns, make predictions, and offer remarkably accurate insights. Their application is no longer restricted to natural language processing; it extends to various domains, including simulations and complex systems. Here, we explore the potential of integrating these AI models with cellular automata to identify and understand forms of artificial ‘consciousness.’

Conway's Game of Life

Inspired by pioneering work on cellular automata, notably Conway's Game of Life (GoL), I propose an experiment that adds layers of complexity to these cells, imbuing them with rudimentary genetic codes, neural networks, and even Large Multimodal Models (LMMs) like GPT-4V. The goal is to see whether any form of fundamental “consciousness” evolves within this dynamic and interactive environment.

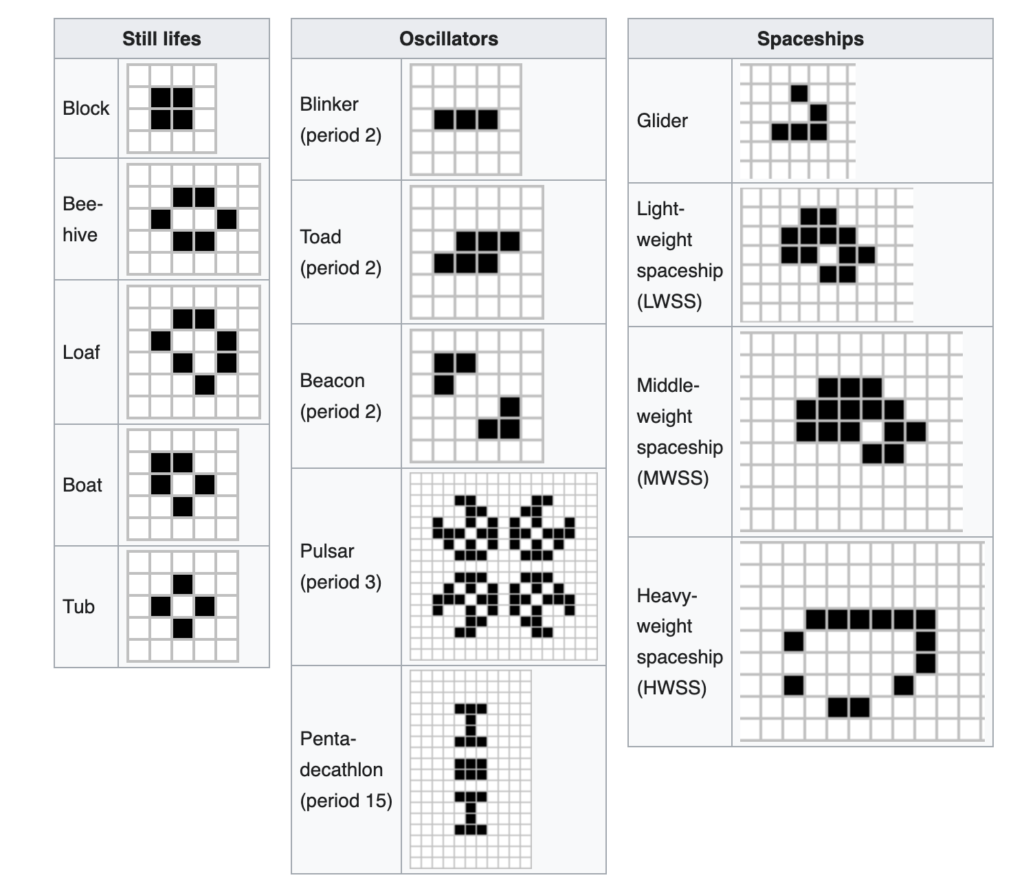

Conway's GoL is a cellular automaton invented by mathematician John Conway in 1970. It's a grid of cells that can be either alive or dead. The grid evolves over discrete time steps according to simple rules: a live cell with 2 or 3 live neighbors stays alive; otherwise, it dies. A dead cell with exactly 3 live neighbors becomes alive.
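To make these rules concrete, here is a minimal Python sketch. It assumes an unbounded grid stored as a set of live-cell coordinates, a representation chosen for brevity rather than anything the experiment prescribes. The usage example runs a glider, one of the best-known emergent GoL patterns: five cells that reproduce their own shape one cell diagonally every four generations.

```python
from collections import Counter

def step(live):
    """Advance Conway's Game of Life by one generation.

    `live` is a set of (row, col) coordinates of live cells on an unbounded grid.
    """
    # Count live neighbors for every cell adjacent to at least one live cell.
    counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth: exactly 3 live neighbors. Survival: a live cell with 2 or 3.
    return {cell for cell, n in counts.items()
            if n == 3 or (n == 2 and cell in live)}

# A glider reappears one cell down and one cell right every 4 generations.
glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}
state = glider
for _ in range(4):
    state = step(state)
print(state == {(r + 1, c + 1) for r, c in glider})  # True
```

Storing only live cells keeps each step proportional to the population rather than to the grid area, which matters once cells carry heavier state such as genomes or networks.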

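Many patterns have been catalogued within GoL, including still lifes that never change, oscillators such as the blinker, and spaceships such as the glider, which travel across the grid.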

Despite its simplicity, the game demonstrates how complex patterns and behaviors can emerge from basic rules, making it a popular model for studying complex systems and artificial life.

The Experiment: A New Frontier

GoL's capacity to generate complexity from simple rules makes it an ideal foundation for the experiment, offering a platform to investigate how fundamental consciousness could evolve under the right conditions.

The experiment would create cells with richer attributes like neural networks and genetic codes. These cells would inhabit a dynamic grid environment with features like hazards and food. An evolutionary algorithm involving mutation, recombination, and selection based on a fitness function would allow cells to adapt. Reinforcement learning could enable goal-oriented behavior.
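The evolutionary loop described above (mutation, recombination, and selection against a fitness function) can be sketched in a few dozen lines. This is a toy under loud assumptions: each genome is a hypothetical list of eight numbers, the fitness function is a placeholder that simply rewards genes near 0.5, and tournament selection with one-point crossover is one common choice among many, not a method the proposal specifies.

```python
import random

GENOME_LEN = 8        # hypothetical number of genes per cell
MUTATION_RATE = 0.1   # hypothetical per-gene mutation probability

def fitness(genome):
    # Placeholder fitness: rewards genes close to 0.5. In the proposed
    # experiment this would instead score behavior such as finding food
    # and avoiding hazards.
    return -sum((g - 0.5) ** 2 for g in genome)

def mutate(genome, rng):
    # Perturb each gene with small Gaussian noise at MUTATION_RATE.
    return [g + rng.gauss(0, 0.1) if rng.random() < MUTATION_RATE else g
            for g in genome]

def recombine(a, b, rng):
    # One-point crossover: head of one parent, tail of the other.
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]

def select(population, rng, k=3):
    # Tournament selection: the fittest of k randomly drawn individuals.
    return max(rng.sample(population, k), key=fitness)

def evolve(pop_size=50, generations=100, seed=0):
    rng = random.Random(seed)
    population = [[rng.random() for _ in range(GENOME_LEN)]
                  for _ in range(pop_size)]
    for _ in range(generations):
        population = [
            mutate(recombine(select(population, rng), select(population, rng), rng), rng)
            for _ in range(pop_size)
        ]
    return max(population, key=fitness)

best = evolve()
print(f"best fitness after evolution: {fitness(best):.4f}")
```

In the full experiment, the fitness of each genome would come from running the cell in the grid environment, which is where nearly all of the computational cost would lie.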

The experiment would be staged, beginning with establishing cell complexity and environment rules. Evolution would then be introduced through mechanisms like genetic variation and selection pressures. Later stages would incorporate learning algorithms enabling adaptive behavior. Extensive metrics, data logging, and visualizations would monitor the simulation's progress.
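For the data-logging stage, even a simple per-step record is a useful starting point. The sketch below assumes the grid state is a set of live-cell coordinates and writes hypothetical metrics (live cells, births, deaths) as CSV using Python's standard `csv` module; a real run would log far richer data, such as fitness and genome statistics.

```python
import csv
import io

def log_metrics(states, out):
    """Write one CSV row of simple metrics per simulation step.

    Each state is a set of live-cell coordinates. At step 0 every live
    cell is counted as a birth, since there is no previous state.
    """
    writer = csv.DictWriter(out, fieldnames=["step", "live", "births", "deaths"])
    writer.writeheader()
    prev = set()
    for t, state in enumerate(states):
        writer.writerow({
            "step": t,
            "live": len(state),
            "births": len(state - prev),   # cells alive now but not before
            "deaths": len(prev - state),   # cells alive before but not now
        })
        prev = state

# Two generations of a blinker (a period-2 oscillator), logged to memory.
buf = io.StringIO()
log_metrics([{(1, 0), (1, 1), (1, 2)}, {(0, 1), (1, 1), (2, 1)}], buf)
print(buf.getvalue())
```

For a long run, `out` would be a file opened with `newline=""`, as the `csv` module documentation recommends, so rows can be streamed to disk rather than held in memory.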

Running the simulation long-term could reveal emergent complexity and signs of fundamental consciousness, though our current understanding of consciousness makes this speculative. The value lies in systematically investigating open questions about the origins of consciousness through evolution and learning.

Rigorously testing hypotheses built on mechanisms thought to underlie consciousness could yield theoretical insights, even if full consciousness does not emerge. As a simplified yet expansive model of evolution, the simulation allows these mechanisms to be examined in isolation or in combination.

Success could motivate new directions like exploring alternative environments and selection pressures. Failure would also be informative.

Integrating GPT-4-like models

Integrating a model akin to GPT-4 into the experiment could add a nuanced layer to the study of consciousness. GPT-4 is essentially a feed-forward (transformer-based) neural network: its output is determined by the input it receives, and it retains no internal state between separate queries.

The model's architecture may need to be fundamentally extended to allow for the possibility of evolving consciousness. Introducing recurrent neural mechanisms, feedback loops, or more dynamic architectures could make the model's behavior more analogous to systems that display rudimentary forms of awareness.
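The difference between a feed-forward mapping and a recurrent one can be shown in a few lines. This is a deliberately tiny scalar illustration with arbitrary, hypothetical weights, not a model of GPT-4: the feed-forward function always returns the same output for the same input, while the recurrent unit carries an internal state, so identical inputs can produce different outputs depending on what came before.

```python
import math

def feed_forward(x, w=0.8, b=0.1):
    # Stateless: the output depends only on the current input.
    return math.tanh(w * x + b)

class RecurrentCell:
    """Scalar Elman-style unit: h_t = tanh(w_x * x_t + w_h * h_(t-1) + b)."""

    def __init__(self, w_x=0.8, w_h=0.5, b=0.1):
        self.w_x, self.w_h, self.b = w_x, w_h, b
        self.h = 0.0  # internal state carried from one step to the next

    def step(self, x):
        self.h = math.tanh(self.w_x * x + self.w_h * self.h + self.b)
        return self.h

cell = RecurrentCell()
print(feed_forward(1.0), feed_forward(1.0))  # same input, same output
print(cell.step(1.0), cell.step(1.0))        # same input, different outputs
```

The feedback term `w_h * h` is the whole difference: it lets past inputs influence present behavior, which is the property the paragraph above argues a consciousness-oriented architecture would need.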

Furthermore, learning and adaptation are vital elements in developing complex traits like consciousness. In its existing form, GPT-4 cannot learn or adapt after its initial training phase, because its weights are fixed once training ends. A reinforcement learning layer could therefore be added to the model to enable adaptive behavior. Although this would introduce substantial engineering challenges, it could prove pivotal in observing evolution in simulated environments.
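As one illustration of what a reinforcement learning layer involves, the sketch below uses tabular Q-learning, a standard RL algorithm, on a hypothetical one-dimensional corridor where a cell earns a reward for reaching food at the far end. Adding RL to a GPT-4-scale model would look very different in practice; the corridor, the reward, and the hyperparameters here are all assumptions chosen for readability.

```python
import random

N_STATES = 6          # hypothetical corridor positions 0..5; food at position 5
ACTIONS = (-1, +1)    # move left or move right

def env_step(state, action):
    # Move within the corridor walls; reaching the food ends the episode.
    nxt = min(max(state + action, 0), N_STATES - 1)
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: explore at random, otherwise take a best action
            # (ties broken randomly).
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])
            nxt, reward, done = env_step(state, action)
            # Q-learning update toward reward + discounted best next value.
            target = reward if done else reward + gamma * max(q[(nxt, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = nxt
    return q

q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)
```

After training, the greedy policy should be to move right (`+1`) in every position, meaning the cell has learned from reward alone to head toward the food.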

The role of sensory experience in consciousness is another facet worth exploring. To facilitate this, GPT-4 could be interfaced with other models trained to process different types of sensory data, such as visual or auditory inputs. This would offer the model a rudimentary ‘perception’ of its simulated environment, thereby adding a layer of complexity to the experiment.
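One simple way to give a language model a ‘perception’ of the grid is to translate a cell's surroundings into text. The sketch assumes a hypothetical grid encoding (`F` for food, `H` for hazard, anything else empty) and a hypothetical prompt wording; a multimodal model such as GPT-4V could instead be shown a rendered image of the grid.

```python
def describe_neighborhood(grid, row, col):
    """Summarize the 8 cells around (row, col) as a one-line text observation.

    Hypothetical encoding: 'F' = food, 'H' = hazard, anything else = empty.
    """
    labels = {"F": "food", "H": "hazard"}
    directions = {
        (-1, 0): "north", (1, 0): "south", (0, -1): "west", (0, 1): "east",
        (-1, -1): "north-west", (-1, 1): "north-east",
        (1, -1): "south-west", (1, 1): "south-east",
    }
    sensed = []
    for (dr, dc), name in directions.items():
        r, c = row + dr, col + dc
        # Ignore positions that fall outside the grid.
        if 0 <= r < len(grid) and 0 <= c < len(grid[r]) and grid[r][c] in labels:
            sensed.append(f"{labels[grid[r][c]]} to the {name}")
    return "You sense " + (", ".join(sensed) if sensed else "nothing nearby") + "."

grid = ["F..",
        ".X.",   # X marks the observing cell
        "..H"]
print(describe_neighborhood(grid, 1, 1))
# You sense food to the north-west, hazard to the south-east.
```

The resulting string could be placed in a model's prompt each turn, giving it a restricted, local view of the world much like a cell's limited senses.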

Another avenue for investigation lies in enabling complex interactions within the model. The phenomenon of consciousness is often linked to social interaction and cooperation. Allowing GPT-4 to engage with other instances of itself or with different models in complex ways could be crucial in observing emergent behaviors indicative of consciousness.

Self-awareness or self-referential capabilities could also be integrated into the model. While this would be challenging to implement, even a rudimentary form of ‘self-awareness’ could yield fascinating results and be considered a step toward fundamental consciousness.

Ethical considerations become crucial since the end goal is to explore the possible emergence of consciousness. Rigorous oversight mechanisms need to be established to monitor ethical dilemmas such as simulated suffering, raising questions that extend into philosophy and morality.

Philosophical, practical, and ethical considerations

The approach doesn't merely pose scientific questions; it dives deep into the philosophy of mind and existence. One intriguing argument is that if complex phenomena like consciousness can emerge from simple algorithms, then simulated consciousness might not be fundamentally different from human consciousness. After all, aren't humans also governed by biochemical algorithms and electrical impulses?

The experiment opens up a Pandora's Box of ethical considerations. Is it ethical to potentially create a form of simulated consciousness? To navigate these treacherous waters, consulting with experts in ethics, AI, and potentially law would likely be needed to establish guidelines and ethical stop-gaps.

Further, introducing advanced AI models like LLMs into the experimental setup complicates the ethical landscape. Is it ethical to use AI to explore or potentially generate forms of simulated consciousness? Could an AI model become a stakeholder in the ethical considerations?

Practically, running an experiment of this magnitude would require significant computational power. Cloud computing resources are an option, but they come with their own costs. For this experiment, I've estimated the cost of running high-end GPUs and CPUs locally for 24 months at around $21,000 to $24,000, covering electricity, cooling, and maintenance.
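Spread evenly over the 24 months (an assumption, since only the total range is estimated), that works out to a monthly budget of:

```latex
\frac{\$21{,}000}{24\ \text{months}} = \$875\ \text{per month},
\qquad
\frac{\$24{,}000}{24\ \text{months}} = \$1{,}000\ \text{per month}
```

That monthly figure is the natural baseline against which cloud GPU pricing could be compared.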

Measuring ‘consciousness’

Measuring the emergence of consciousness would also require the development of rigorous quantitative metrics. These could be designed around existing theories of consciousness, like Integrated Information Theory or Global Workspace Theory. These metrics would provide a structured framework for evaluating the model's behavior over time.
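Formal measures such as IIT's Φ are computationally demanding, since they involve searching over partitions of a system, so a simulation would likely start with cruder information-theoretic proxies. The sketch below computes the mutual information, in bits, between two discrete time series, such as whether the left and right halves of the grid are active at each step. It is a hypothetical stand-in offered for illustration, not an implementation of Integrated Information Theory or Global Workspace Theory.

```python
import math
from collections import Counter

def mutual_information(xs, ys):
    """Mutual information, in bits, between two equal-length discrete sequences."""
    if len(xs) != len(ys) or not xs:
        raise ValueError("sequences must be non-empty and of equal length")
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px, py = Counter(xs), Counter(ys)
    # I(X;Y) = sum over observed (x, y) of p(x,y) * log2(p(x,y) / (p(x) p(y)))
    return sum(
        (c / n) * math.log2((c / n) / ((px[x] / n) * (py[y] / n)))
        for (x, y), c in joint.items()
    )

# Hypothetical activity traces for two halves of the grid (1 = active).
left = [0, 1, 0, 1, 1, 0, 1, 0]
right = [0, 1, 0, 1, 1, 0, 1, 0]
print(mutual_information(left, right))  # perfectly coupled halves share 1 bit
```

Independent halves score 0 bits and perfectly coupled binary halves score 1 bit; tracking how such a score changes across generations is one modest, measurable signal of growing integration.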

Corroborating the findings of this experiment with biological systems could offer a multidimensional perspective. Specifically, the computational model's behaviors and patterns could be compared with those of simple biological organisms that some researchers consider conscious at some level. Such comparisons could help validate the findings and potentially offer new insights into the nature of consciousness itself.

This experiment, though speculative, could offer groundbreaking insights into the evolution of complex traits like consciousness from simple systems. It pushes the boundaries of what we understand about life, consciousness, and the nature of existence itself. Whether we discover the emergence of fundamental consciousness or not, the journey promises to be as enlightening as any potential destination.